Understanding MLOps Workflows for Production AI

Machine Learning Operations (MLOps) is a set of practices that combines machine learning, DevOps, and data engineering to deploy and maintain ML models in production reliably and efficiently. As AI becomes central to many business applications, having a structured MLOps workflow is crucial to ensure models deliver consistent value.

Why MLOps Matters in Production

- Consistency: Automates model training, testing, and deployment, reducing human error.

- Scalability: Enables managing multiple models and datasets at scale.

- Collaboration: Bridges the gap between data scientists and operations teams.

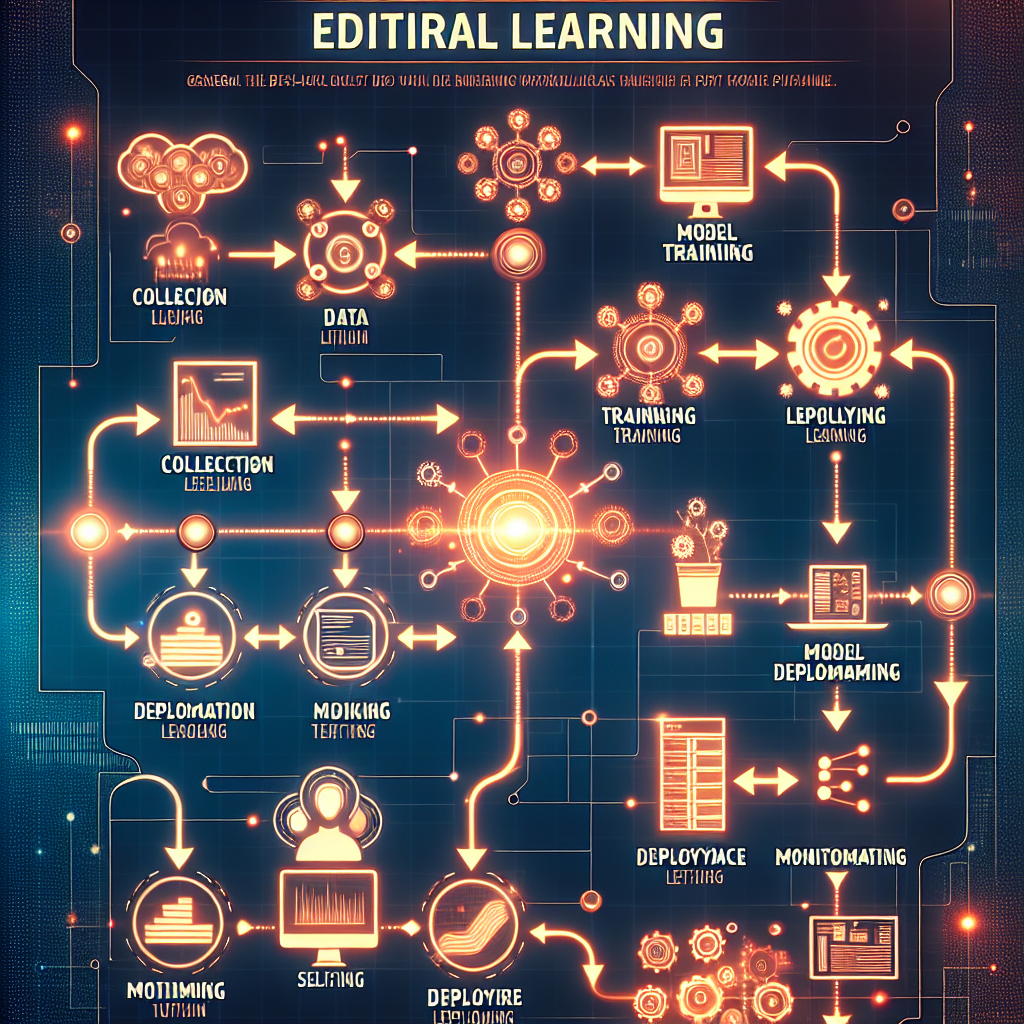

Key Stages of MLOps Workflows

1. Data Collection and Management

Data is the foundation of any ML model. This stage involves:

- Gathering diverse and relevant datasets.

- Ensuring data quality and integrity.

- Managing data storage and versioning.

Tools like Apache Kafka, for streaming ingestion, and cloud storage solutions, for durable dataset storage, help streamline this process.
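The quality and versioning steps above can be sketched in a few lines. This is a minimal illustration, not a recommended tool: the schema in `REQUIRED_FIELDS`, the field names, and the helper functions `validate_rows` and `dataset_version` are all hypothetical. It rejects rows that fail a type check and derives a version id from a hash of the cleaned data.

```python
import hashlib
import json

# Hypothetical schema for illustration: field name -> expected type.
REQUIRED_FIELDS = {"user_id": int, "amount": float}


def validate_rows(rows):
    """Split rows into (valid, rejected) using the required-field schema."""
    valid, rejected = [], []
    for row in rows:
        ok = all(
            isinstance(row.get(name), expected)
            for name, expected in REQUIRED_FIELDS.items()
        )
        (valid if ok else rejected).append(row)
    return valid, rejected


def dataset_version(rows):
    """Derive a deterministic version id from the dataset contents."""
    canonical = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()[:12]


rows = [
    {"user_id": 1, "amount": 9.5},
    {"user_id": 2, "amount": None},  # fails the type check
    {"user_id": 3, "amount": 12.0},
]
valid, rejected = validate_rows(rows)
version = dataset_version(valid)
print(len(valid), len(rejected), version)
```

Because the version id depends only on the data, the same cleaned dataset always maps to the same id, which makes it possible to record exactly which data a model was trained on.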

2. Model Development

Data scientists explore and train models using:

- Feature engineering.

- Algorithm selection and tuning.

- Experiment tracking for reproducibility.

Frameworks such as TensorFlow and PyTorch are used to build and train models, while tools such as MLflow track experiments so that results can be reproduced.
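To make the idea of experiment tracking concrete, here is a small sketch that uses only the Python standard library. It is not the API of MLflow or any other tracker: the functions `log_run` and `best_run`, the file name `runs.jsonl`, and the parameter names are assumptions chosen for illustration. Each run is appended as one JSON line so that parameters and metrics stay together.

```python
import json
import tempfile
import time
from pathlib import Path


def log_run(log_path, params, metrics):
    """Append one experiment run (parameters and metrics) as a JSON line."""
    record = {"timestamp": time.time(), "params": params, "metrics": metrics}
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")
    return record


def best_run(log_path, metric):
    """Return the logged run with the highest value for the given metric."""
    with open(log_path, encoding="utf-8") as f:
        runs = [json.loads(line) for line in f if line.strip()]
    return max(runs, key=lambda r: r["metrics"][metric])


# Hypothetical runs; in practice the metrics come from real training jobs.
log_path = Path(tempfile.mkdtemp()) / "runs.jsonl"
log_run(log_path, {"learning_rate": 0.1, "max_depth": 3}, {"accuracy": 0.81})
log_run(log_path, {"learning_rate": 0.05, "max_depth": 5}, {"accuracy": 0.86})
best = best_run(log_path, "accuracy")
print(best["params"])
```

A dedicated tracking tool adds artifact storage, a UI, and team access on top of this, but the core record is the same: which parameters produced which metrics.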

3. Continuous Integration and Testing

CI pipelines automate:

- Code integration from multiple contributors.

- Unit and integration testing for model code.

- Validation of model accuracy and performance.

This phase helps catch regressions in code or model quality before they reach production.
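The model validation step can be expressed as a simple gate that a CI job runs after training. The sketch below is one possible shape, assuming classification accuracy as the metric; the function names `accuracy` and `passes_gate`, the `tolerance` value, and the toy predictions are illustrative assumptions.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the labels."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("predictions and labels must be non-empty and equal length")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def passes_gate(candidate_acc, baseline_acc, tolerance=0.01):
    """Accept the candidate unless it is worse than baseline by more than tolerance."""
    return candidate_acc >= baseline_acc - tolerance


# Toy held-out set; real pipelines would load a fixed evaluation dataset.
labels = [1, 0, 1, 1, 0, 1, 0, 0]
baseline_preds = [1, 0, 1, 0, 0, 1, 0, 1]   # 6 of 8 correct
candidate_preds = [1, 0, 1, 1, 0, 1, 0, 1]  # 7 of 8 correct

baseline_acc = accuracy(baseline_preds, labels)
candidate_acc = accuracy(candidate_preds, labels)
print(candidate_acc, passes_gate(candidate_acc, baseline_acc))
```

In a CI job, a failed gate would typically end the run with a non-zero exit code so that the candidate model is never promoted.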

4. Model Deployment

Deployment involves:

- Packaging the model.

- Deploying to serving infrastructure (cloud, edge, or on-prem).

- Managing versions and rollback capabilities.

Using containerization tools like Docker and orchestration platforms like Kubernetes is common.
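Version management and rollback can be illustrated without any infrastructure. The class below is a hypothetical in-memory stand-in for a model registry, not the interface of Docker, Kubernetes, or any registry product; `ModelRegistry` and its methods are names invented for this sketch.

```python
class ModelRegistry:
    """In-memory record of deployed model versions with rollback support."""

    def __init__(self):
        self._history = []  # versions in deployment order, newest last

    def deploy(self, version):
        """Make the given version the active one."""
        self._history.append(version)
        return version

    @property
    def active(self):
        """Currently served version, or None if nothing is deployed."""
        return self._history[-1] if self._history else None

    def rollback(self):
        """Drop the active version and reactivate the previous one."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self.active


registry = ModelRegistry()
registry.deploy("v1")
registry.deploy("v2")
restored = registry.rollback()
print(restored)
```

Keeping the full deployment history, rather than only the current version, is what makes a rollback a cheap pointer change instead of a rebuild.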

5. Monitoring and Maintenance

Once models are live, monitoring is essential to:

- Track model performance and drift.

- Detect data quality issues.

- Trigger retraining or alert teams when needed.

Tools like Prometheus, which collects metrics and evaluates alerting rules, or custom dashboards support this continuous monitoring.
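One common way to quantify drift in a single numeric feature is the Population Stability Index (PSI), which compares the binned distribution of live data against a reference sample. The sketch below is a simplified version under stated assumptions: equal-width bins taken from the reference range, a small floor for empty bins, and synthetic data. The 0.25 cutoff mentioned afterwards is a widely used rule of thumb, not a fixed standard.

```python
import math


def psi(expected, actual, bins=5):
    """Population Stability Index between a reference and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic samples for illustration only.
reference = [x / 10 for x in range(100)]    # spread evenly over [0, 10)
same = [x / 10 for x in range(100)]         # identical distribution
shifted = [5 + x / 20 for x in range(100)]  # concentrated on [5, 10)

print(psi(reference, same), psi(reference, shifted))
```

A monitoring job could compute this per feature on a schedule and raise an alert when the score exceeds a chosen threshold such as 0.25.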

6. Feedback Loop and Retraining

Production data and user feedback help:

- Identify model weaknesses.

- Update datasets.

- Retrain models to improve accuracy and relevance.

Automated retraining pipelines help the model adapt to new patterns over time.
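The decision to retrain can be written down as an explicit policy rather than left to judgment. The following sketch assumes three inputs that a monitoring system might supply (a drift score, live accuracy, and the number of newly labeled samples); the `RetrainPolicy` class, its thresholds, and `should_retrain` are hypothetical and would need tuning for a real model.

```python
from dataclasses import dataclass


@dataclass
class RetrainPolicy:
    # Hypothetical thresholds; tune them for the model and data at hand.
    max_drift: float = 0.25
    min_accuracy: float = 0.80
    min_new_samples: int = 1000


def should_retrain(policy, drift_score, live_accuracy, new_samples):
    """Return (decision, reason) for the current monitoring snapshot."""
    if new_samples < policy.min_new_samples:
        return False, "not enough new labeled data"
    if live_accuracy < policy.min_accuracy:
        return True, "accuracy below threshold"
    if drift_score > policy.max_drift:
        return True, "input drift above threshold"
    return False, "model within limits"


policy = RetrainPolicy()
decision, reason = should_retrain(
    policy, drift_score=0.31, live_accuracy=0.88, new_samples=5000
)
print(decision, reason)
```

Returning the reason alongside the decision makes each triggered retraining run auditable after the fact.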

Best Practices for MLOps Workflows

- Version everything: Code, data, and models must be versioned.

- Automate pipelines: Reduce manual steps to minimize errors.

- Collaborate actively: Use shared platforms and documentation.

- Implement robust testing: Validate both code and model quality.

- Monitor continuously: Real-time alerts surface failures that would otherwise go unnoticed.

Challenges in MLOps

- Managing data privacy and compliance.

- Handling model interpretability for complex AI.

- Balancing speed of deployment with thorough testing.

Addressing these requires careful planning and the right toolset.

Conclusion

Effective MLOps workflows are vital to unlock the full potential of AI in production environments. They ensure models are reliable, scalable, and continuously improved. By following structured stages and best practices, teams can reduce risks and accelerate AI-driven innovation.

